They simply don’t have the time to test it

Leading AI labs have been churning out models and features left and right for the past couple of years because they consider it an arms race.

Anthropic in particular has been speeding up recently with Claude Code and the ecosystem around it.

Code, Cowork, Excel, Chrome extension… And then, for Claude Code itself, they started adding more sophisticated features: Remote control, Dispatch, and now Design.

Did you keep count?

It is understandable: they are racing toward a trillion-dollar valuation. But such speed comes at a cost.

They want users to live in their ecosystem, but it is starting to crack like drying clay, because the foundation was not properly cured: they simply don’t have the time.

Or maybe (and this is a bit controversial) their coding agents can’t keep up with churning out so many features. Or maybe their developers can’t keep up with the coding agents?

Whatever it is, a stable and solid product is part of the brand. And after they sped up, it started to erode.

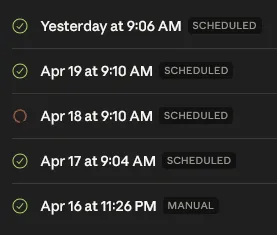

Why would I use their Routines (yes, another recent feature) when they aren’t guaranteed to work? I gave it a spin and created a simple daily recurring task. It failed at least once with no explanation, just an infinite spinner.

Meanwhile, on my own server, a task scheduler written in Python (by Claude) has been running consistently for several weeks now.

Yes, I know, different scale and complexity. I also know that my setup isn’t valued at multiple billions.

I have been hard on OpenAI and have been “praising” Anthropic. I really enjoy Anthropic’s model, even with the weekly quotas. But credit where credit is due: OpenAI doesn’t have multiple outages in a month. And they have a sizeable audience as well.