Don't start with ChatGPT, start with the map

One of the “sins” of sprawling new disciplines is vague terminology: because the industry is still very young, growing rapidly, and its vocabulary has not settled yet, different people come up with different names for the same things.

Take “AI”, for example: nowadays the word mostly means large language models and the details surrounding them. Tokens, context windows, hallucinations…

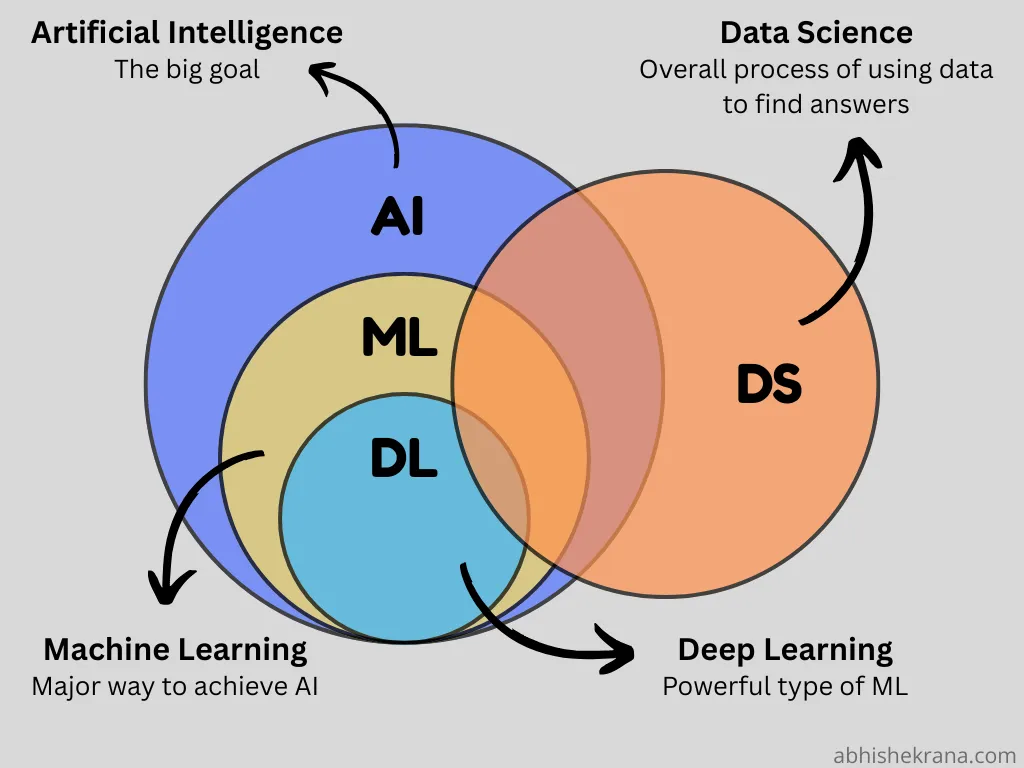

In fact, the term AI itself is confusing because it is too broad and loosely defined. In computer science and engineering classes on machine learning, the following diagram is commonly used: deep learning (DL) is placed as a subset of machine learning (ML), which itself is a subset of artificial intelligence (AI). It looks like this:

Similarly, people now throw these terms around everywhere: on YouTube, in blogs, chats, podcasts, wherever. The problem is that this does not help a novice understand the field better; it makes the field harder to understand and more confusing.

How do I know? Because I was at the same point 9 years ago, reading about loss functions, cost functions and error functions, only to find out in the end that these words mean essentially the same thing.

Therefore, I would advise against starting to learn “AI” with ChatGPT. Sure, it is a good first introduction to what the technology is capable of, and a good way to get excited about it. But it is a poor choice for actually understanding the technology and the science behind it. And no, learning how words are split into tokens doesn't really cut it, even if it is important. It doesn't even scratch the surface.

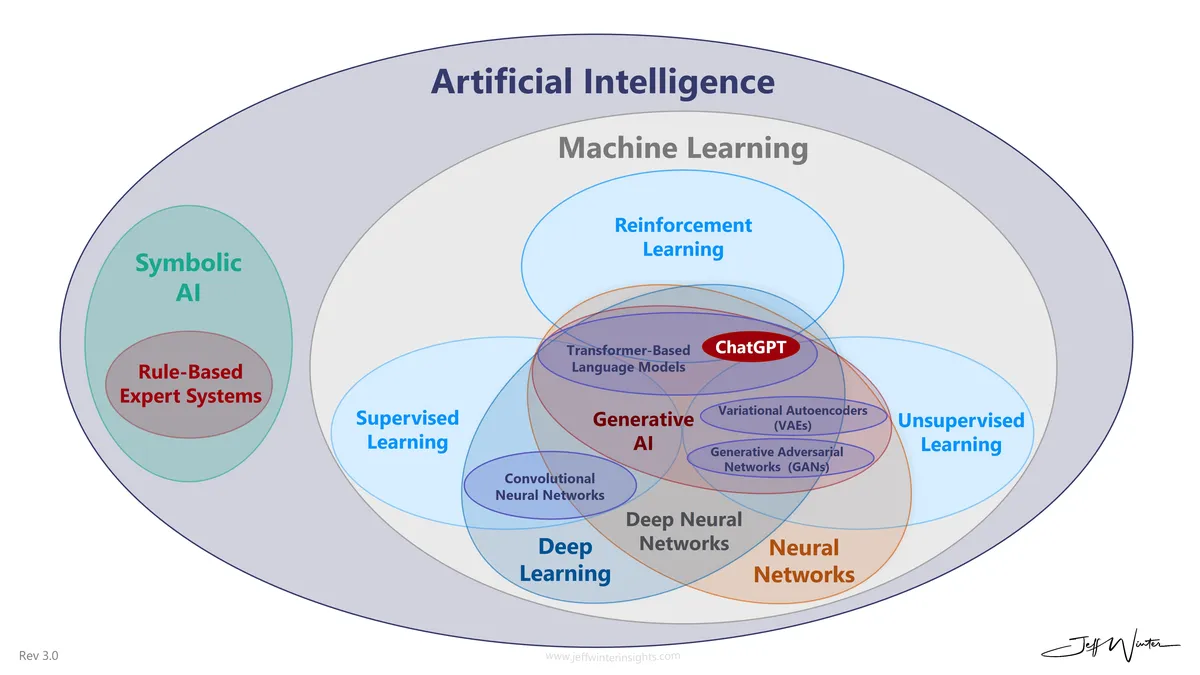

What I propose is a different approach to learning: start with a “map” of the concepts in the field and the relationships between them. Then, unfold this map. Here is what I mean; this is roughly what the field of AI currently consists of:

It might look confusing at first glance, but we can trace a hierarchy of concepts. For example:

- Artificial intelligence ↓↓↓

- Machine learning

- Deep neural networks

- Generative AI

- Supervised learning

- Transformer-based language models ↑↑↑

Now, after untangling this chain of concepts, you can start from either end. You can learn about language models, how tokenization works and how the models are trained. But don't be surprised if none of these topics touches on the core fundamentals of how neural networks, and machine learning in general, work: those sit at the other end of the chain.

If instead you are interested in learning the fundamentals of how neural networks work, start from the beginning of the chain. The hardest part of learning is not getting the information; it is mapping out the numerous concepts in your mind.
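If it helps to see the chain as something you can actually walk, here is a minimal Python sketch. It only encodes the example chain from the list above; the names `CONCEPT_CHAIN`, `learning_path` and `distance_between` are my own illustrative choices, not part of any library, and a real map of the field would be a graph with many branches rather than a single line.

```python
# The example chain from the list above, ordered from the broadest
# concept to the narrowest. This is an illustration, not a full map.
CONCEPT_CHAIN = [
    "Artificial intelligence",
    "Machine learning",
    "Deep neural networks",
    "Generative AI",
    "Supervised learning",
    "Transformer-based language models",
]


def learning_path(start_from_fundamentals: bool = True) -> list[str]:
    """Return the chain in reading order for the chosen starting end."""
    if start_from_fundamentals:
        return list(CONCEPT_CHAIN)
    return list(reversed(CONCEPT_CHAIN))


def distance_between(a: str, b: str) -> int:
    """Number of steps separating two concepts in the chain."""
    return abs(CONCEPT_CHAIN.index(a) - CONCEPT_CHAIN.index(b))


if __name__ == "__main__":
    # Starting from the language-model end of the chain.
    print(" -> ".join(learning_path(start_from_fundamentals=False)))
    # How far a language-model topic sits from general machine learning.
    print(distance_between("Transformer-based language models", "Machine learning"))
```

The distance is the point here: a topic such as tokenization lives at one end of the chain, several steps away from the fundamentals of machine learning at the other.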

And that's where systems thinking helps — not just for work, but for learning.